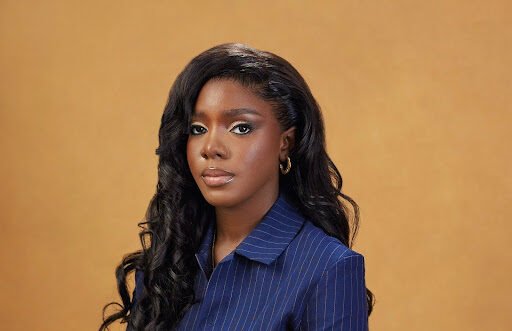

“In 2026, the real security story is that our systems are making more decisions about people than ever before, but too few of those systems were actually designed with people’s rights and dignity in mind,” says Modupe Akintan, a Privacy and AI Engineer at Amazon whose career combines elite academic training with frontline governance of data‑driven technologies. It is an observation that captures both the promise and the anxiety of an era in which autonomous agents, non‑human identities, and AI‑enabled platforms are rapidly outpacing the guardrails meant to keep them aligned with law and public expectations.

Modupe’s field has emerged as a kind of connective tissue between privacy engineering, cybersecurity, AI governance, and technology policy, seeking to answer a deceptively simple question: how should societies govern the systems that increasingly govern them? From research labs at Stanford to working groups at the Cloud Security Alliance and a privacy role at Amazon, Modupe has positioned herself squarely in that debate, helping translate emergent regulation and policy into technical and organizational controls that can actually run at scale.

From Classroom Prodigy to Security Researcher

Long before she was advising on AI governance, Modupe was known for an academic record that marked her out as a prodigy. In Nigeria, she completed a Bachelor of Engineering in Computer Engineering at Afe Babalola University, graduating with first‑class honors, top‑of‑class recognition, and one of the highest grade point averages in her cohort. Local coverage and celebratory posts highlighted not only her grades but also the load she carried: leadership positions, student representation, and editorial work layered atop a demanding technical curriculum.

Those years taught her to manage multiple responsibilities without losing sight of the details, a skill that would prove essential in a field where governance, engineering, and policy collide. A fully funded move to Stanford University followed; there she earned a Master of Science in Computer Science with a concentration in computer and network security, formalizing her expertise in systems security, cryptography, and network defense. At Stanford’s Empirical Security Research Group, she evaluated the risk‑rating methodologies of third‑party risk management companies, probing how vendors score security posture and how those scores shape corporate decisions about whom to trust.

That research focus now looks prescient. As organizations outsource key functions to cloud providers, AI vendors, and identity platforms, risk ratings and contractual assurances have become central to how boards understand their exposure. “You quickly realize that a lot of ‘trust’ in the system is really trust in somebody else’s model of risk,” she says. “If those models are wrong, entire sectors can be misled about how safe they actually are.”

Bridging Privacy Engineering and AI Governance

Today, Modupe describes her field of expertise as risk and privacy in data‑driven systems, a domain that deliberately spans disciplines. The work, as she defines it, involves identifying, assessing, and mitigating privacy, security, and societal risks that arise when AI‑enabled and data‑intensive systems are designed, deployed, and scaled. In practical terms, that means mapping data‑protection laws, AI governance requirements, and security standards onto concrete organizational controls that engineers and operations teams can implement.

The agenda is broad: third‑party risk, automated decision‑making, emerging AI harms, and systemic vulnerabilities in vendor ecosystems all fall under the remit. In working groups such as the Cloud Security Alliance’s AI Safety and Data Privacy Engineering effort, Modupe contributes to guidance on privacy‑by‑design across the machine‑learning lifecycle, helping define how data should be collected, labeled, stored, and used in ways that are both compliant and protective of user interests.

Inside the New Identity Frontier

If there is a theme that links many of Modupe’s current concerns, it is the transformation of identity in the age of AI. Industry analyses describe a “triple threat” for 2026: the rise of agentic AI, gaps in governance frameworks, and a proliferation of non‑human identities. Where traditional security is built around the human user as the primary subject, new systems must manage fleets of AI agents, automated workflows, and service accounts, many of which have privileges that exceed those of their human creators.

For privacy and risk specialists like Modupe, that shift is more than a technical challenge. “When an AI agent can log into systems, move data, and make decisions, the line between ‘user’ and ‘tool’ starts to blur,” she says. “If we don’t design for that from the start, then we’re building a world where no one is truly in charge of the data flows that shape people’s lives.”

High-Level Leadership in Big Tech

Within Amazon, Modupe works on privacy, AI governance, and risk management for data‑driven systems in a role that, by design, sits close to regulatory expectations without disclosing internal mechanics. Her remit is to translate high‑level compliance obligations into practical guidance that product, operations, and engineering teams can apply without compromising the confidentiality of internal tools or infrastructure.

The position crowns a sequence of roles that have exposed her to privacy questions from multiple vantage points. At Apple, she worked on the User Privacy Engineering team, embedding privacy considerations in consumer‑facing products. At PwC Nigeria, she advised clients on cybersecurity and privacy controls as African regulators expanded data‑protection regimes. Across those roles, a consistent theme has been the need to build bridges between legal obligations, internal risk appetites, and the concrete realities of engineering and operations.

Policy Fellowships and Public-Interest Governance

Unlike many engineers, Modupe has invested heavily in the policy side of her field. As Director of Partnerships at the Paragon Policy Fellowship, she helped scope applied technology‑policy projects and collaborated with government partners, building a network that spans regulators, researchers, and practitioners. She is a Fellow of CHAIRES, an initiative that convenes experts around AI, human rights, and emerging technologies, and a member of the Center for AI and Digital Policy’s research group through its AI Policy Clinic, which supports evidence‑based recommendations on AI governance.

Her contributions extend into academic and professional service: she has served on the program committee for the 41st IFIP SEC conference, reviewed for IEEE’s Intelligent and Autonomous Transportation and Mobility Systems Initiative, and contributed to the IEEE Tech Forum on Societal Harms in 2024, which examined how digital systems can amplify bias, misinformation, and other harms at scale. She has also judged early‑stage projects at Vibe Demo Day, hosted by Venture Miner Academy with support from the Artizen Fund, bringing a privacy and risk lens to emerging technologies. “The question I keep asking in those spaces is: what happens to the most vulnerable person who touches this system?” she says. “If we can’t answer that convincingly, we’re not done designing.”

What the Honors Are For

Asked what it means to carry elite academic honors and industry recognition into a field defined by invisible labor and incremental safeguards, Modupe answers with characteristic precision. “The honors matter only if they raise the standard you hold yourself to,” she says. “If you’ve been given access to world‑class training, to policy tables, to big‑tech resources, then you don’t get to pretend that the harms are too abstract or too hard to see.”

For her, the point of turning academic distinction into industry‑shaping privacy leadership is not prestige but responsibility. “If ten years from now people can live and work in AI‑driven systems without feeling constantly observed, profiled or excluded, it won’t be because the technology magically became ‘ethical,’” she reflects. “It will be because enough of us used every credential and every opportunity we had to insist that risk and privacy in data‑driven systems were not side notes, but the core design problem of our time.”